- Blog

- Angry birds space trailers

- Icecream screen recorder pro cracked

- Poems about the judgment seat of christ

- Install cellprofiler conda

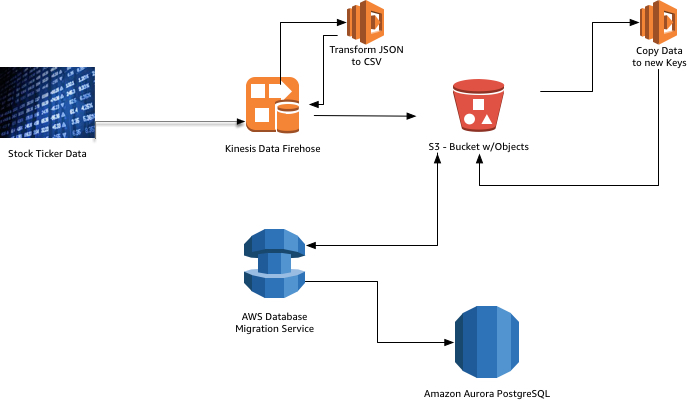

- Aws postgresql s3

- Google hangouts for mac standalone

- Quicken loans address

- Assetto corsa competizione ultimate edition

- Bridge constructor apk

- Atlassian trello

- Ess eye pro sunglasses

- Naruto and blackfire fanfiction

- Notefile widget mac

- Dark souls cinders mod

Step 4 : Create Access key to authenticate from Database Plpgsql | 1.0 | pg_catalog | PL/pgSQL procedural language +-+-+-Īws_commons | 1.0 | public | Common data types across AWS servicesĪws_s3 | 1.0 | public | AWS S3 extension for importing data from S3 Step 3 : Configured AWs_S3 extension in PostgreSQL Please note our staging table comprise of only one TEXT column. >create table staging_table ( col1 text) We will also create a staging table to captured initial load from S3 and later fetch from it to load into final target table “POSTAL_INFO”. Step 2 : Create Table to store Data imported from S3 LOADSAMPLE.txt to s3://awss3samplefile/LOADSAMPLE.txt > aws s3 cp LOADSAMPLE.txt s3://awss3samplefile

AWS POSTGRESQL S3 HOW TO

We can also enable IAM Authentication and use same for above function call.īut still we had not specified how to mapped Information in files with columns/attribute of table, as it is based on positioning.Īs of now, lets try to walkthrough on how to use AWS_S3 extension to load files from S3 bucket. We use below function to load data into tables with no IAM authentication enabled for Database User.Ĭredentials aws_commons._aws_credentials_1 Using aws_s3 extension, we can import files into PostgreSQL tables for further processing. With RDS PostgreSQL, we followed below approach to load data from External source for e.g. With External Tables in Oracle, we can defined Fixed length Character positioning and mapped it with Column or attribute.

AWS POSTGRESQL S3 DOWNLOAD

If needed please get it clone or download same from below repo. State Name ( length :: from 13 to End of line, Position :: 13 – EOL )

AWS POSTGRESQL S3 CODE

State code (length :: 4, Position :: 8 – 12)ģ. Pincode Prefix (length :: 7, Position :: 1 – 7)Ģ. We have three kind of information to incept,ġ. We have used JProfiler(Java Profiling Tool) to analyse the performance of our approaches.As part of one of then requirement on migration from Oracle to RDS PostgreSQL, we needed to support Oracle External Tables functionality to Load/Query data from an file in RDS PostgreSQL.Īll files to be loaded were in Fixed Length format.įor our Reference, lets consider below files comprising of Pincode prefix for all states in India. = Comparison of the 3 approaches on basis of Memory and Time:įor comparison, we have used a dataset of 1.5 million rows and set the maximum memory limit as 4GB for each test. In order to make Spring Data JPA stream to work, the return type of the query in the repository file should be Stream. For Streaming data from PostgresDB till the Application, we can use Spring Data JPA Stream in Java.

In this step, we are Streaming data while receiving it.

Loading everything at onceĪ traditional approach where we bring the result in-memory all at once, prepare a CSV and then upload to AWS using multipart. The above 3 approaches will now be as follows: 1. The PostgresDB is equivalent to the Water tanker, AWS S3 is equivalent to the Backyard Tank and the dataset is equivalent to water.

The above scenario can be compared with how the application pulls data from PostgresDB and uploads to AWS S3.